What is P-hacking?

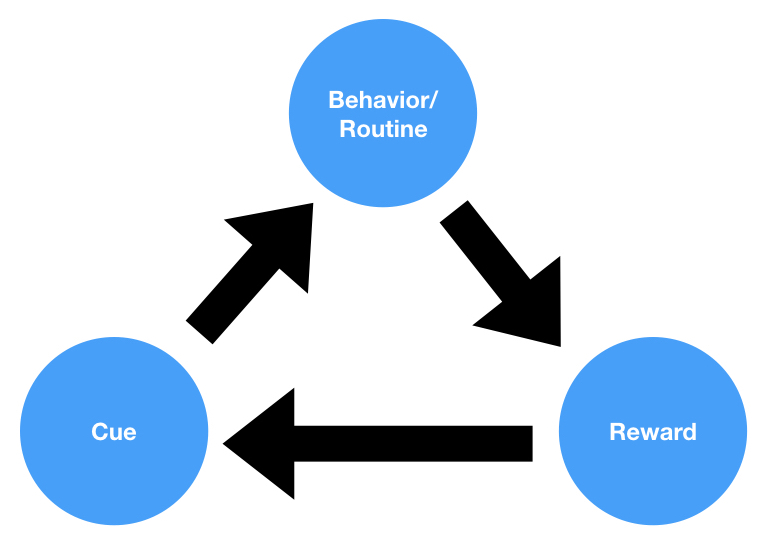

P-hacking is the practice of manipulating data analysis to achieve a statistically significant p-value, typically through selective reporting of outcomes, optional stopping (analyzing data as it comes in and stopping when significance is reached), or trying multiple statistical tests until one produces p < 0.05.

How it works

Simmons et al. (2011) demonstrated that combining even minor forms of analytic flexibility (choosing which variables to control for, whether to include outliers, when to stop collecting data) can push the false positive rate to about 60%, far above the nominal 5%. P-hacking is often unintentional: researchers explore their data looking for ‘what the story is’ without realizing that each analytical choice inflates the false positive rate. Pre-registration and multiverse analysis are the primary countermeasures.
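
To make the optional-stopping mechanism concrete, here is a minimal simulation sketch. It is not the procedure from Simmons et al.; it assumes a simple two-sided z-test on standard normal data with no true effect, and the function names, batch size, and number of peeks are illustrative choices. It compares testing once at a fixed sample size with re-testing after every batch and stopping at the first significant result.

```python
import math
import random


def two_sided_p(z):
    """Two-sided p-value for a z statistic under a standard normal null."""
    return math.erfc(abs(z) / math.sqrt(2))


def false_positive_rate(n_sims, peeks, batch, alpha=0.05, seed=0):
    """Share of null experiments declared significant at any peek.

    Each simulated experiment draws `batch` observations from N(0, 1)
    (so there is no real effect), tests the running mean against zero,
    and stops as soon as p < alpha or after `peeks` looks at the data.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        total, n = 0.0, 0
        for _ in range(peeks):
            for _ in range(batch):
                total += rng.gauss(0.0, 1.0)
            n += batch
            z = total / math.sqrt(n)  # known variance of 1 is assumed
            if two_sided_p(z) < alpha:
                hits += 1
                break
    return hits / n_sims


# Same maximum sample size (100) in both cases; only the number of looks differs.
fixed = false_positive_rate(n_sims=5000, peeks=1, batch=100)
peeking = false_positive_rate(n_sims=5000, peeks=10, batch=10)
print(f"one test at n=100: {fixed:.3f}")
print(f"test after every 10 observations: {peeking:.3f}")
```

Under these assumptions the single test should land near the nominal 5%, while the peeking strategy should come out well above it, even though neither analysis involves a real effect.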

Applied example

A researcher collects data on five outcome measures, analyzes each with and without two potential covariates (three specifications), and includes or excludes borderline outliers. This creates 5 x 3 x 2 = 30 possible analyses. By chance alone, at least one will likely be significant at p < 0.05.
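
As a rough check on "likely", the chance of at least one false positive across k tests can be computed as 1 - (1 - alpha)^k. The sketch below assumes the 30 analyses are independent and each uses alpha = 0.05; the helper name is illustrative. In practice the analyses reuse the same data and are correlated, so the true figure is typically lower than this, though still far above 5%.

```python
def familywise_error(k, alpha=0.05):
    """Probability of at least one false positive in k independent tests."""
    return 1.0 - (1.0 - alpha) ** k


n_analyses = 5 * 3 * 2  # outcomes x covariate specifications x outlier rules
print(n_analyses, round(familywise_error(n_analyses), 3))  # prints: 30 0.785
```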

Why it matters

P-hacking is a primary contributor to the replication crisis, producing a published literature where many statistically significant findings are artifacts of analytic flexibility rather than genuine effects.