I’ve got some bad news.

Behavioral economics is dead.

Yes, it’s still being taught.

Yes, it’s still being researched by academics around the world.

Yes, it’s still being used by practitioners and government officials across the globe.

It sure does look alive… but it’s a zombie—inside and out.

Why do I say this?

Two primary reasons:

- Core behavioral economics findings have been failing to replicate for several years, and *the* core finding of behavioral economics, loss aversion, is on ever more shaky ground.

- Its interventions are surprisingly weak in practice.

Because of these two things, I don’t think that behavioral economics will be a respected and widely used field 10-15 years from now.

If you’re interested in making applied behavioral science your career, I would strongly encourage you to stay clear of behavioral economics.

If you’re someone who works at a company, I strongly encourage you to work with someone who is not solely reliant on the field.

If you’re simply interested in learning more about human behavior and the brain, I would steer clear of the field altogether.

Let’s cover each point in more depth:

1. Core findings are failing to replicate

The last few years have been particularly bad for behavioral economics. A number of frequently cited findings have failed to replicate.

Here are a couple of high profile examples:

- The Identifiable Victim Effect (featured in the workbooks I wrote with Dan Ariely and Kristen Berman in 2014)

- Priming (featured in Nudge, Cialdini’s books, and Kahneman’s Thinking, Fast and Slow)

But the biggest replication failures relate to the field’s most important idea: loss aversion.

To be honest, this was a finding that I lost faith in well before the most recent revelations (from 2018-2020). Why? Because I’ve run studies looking at its impact in the real world—especially in marketing campaigns.

If you read anything about this body of research, you’ll get the idea that losses are such powerful motivators that they’ll turn otherwise uninterested customers into enthusiastic purchasers.

The truth of the matter is that loss-framed and benefit-framed messages are equally effective in driving conversion. In fact, in many circumstances, loss framing is actually *worse* at driving results.

Why?

Because loss-focused messaging often comes across as gimmicky and spammy. It makes you, the advertiser, look desperate. It makes you seem untrustworthy, and trust is the foundation of sales, conversion, and retention.

“So is loss aversion completely bogus?”

Not quite.

It turns out that loss aversion does exist, but only for large losses. This makes sense. We *should* be particularly wary of decisions that can wipe us out. That’s not a so-called “cognitive bias”. It’s not irrational. In fact, it’s completely sensible. If a decision can destroy you and/or your family, it’s sane to be cautious.

“So when did we discover that loss aversion exists only for large losses?”

Well, actually, it looks like Kahneman and Tversky, winners of the Nobel Prize in Economics, knew about this unfortunate fact when they were developing Prospect Theory—their grand theory with loss aversion at its center. Unfortunately, the findings rebutting their view of loss aversion were carefully omitted from their papers, and other findings that went against their model were misrepresented so that they would instead support their pet theory. In short: any data that didn’t fit Prospect Theory was dismissed or distorted.

I don’t know what you’d call this behavior… but it’s not science.

This shady behavior by the two titans of the field was brought to light in a paper published in 2018: “Acceptable Losses: The Debatable Origins of Loss Aversion”.

I encourage you to read the paper. It’s shocking. This line from the abstract sums things up pretty well: “…the early studies of utility functions have shown that while very large losses are overweighted, smaller losses are often not. In addition, the findings of some of these studies have been systematically misrepresented to reflect loss aversion, though they did not find it.”

When the two biggest scientists in your field are accused of having “systematically misrepresented” findings, you know you’ve got a serious problem.

Which leads us to another paper, published in 2018, entitled “The Loss of Loss Aversion: Will It Loom Larger Than Its Gain?”.

The paper’s authors did a comprehensive review of the loss aversion literature and came to the following conclusion: “current evidence does not support that losses, on balance, tend to be any more impactful than gains.”

Yikes.

But given the questionable origins of the field, it’s not surprising that its foundational finding is *also* dubious.

If loss aversion can’t be trusted, then no other idea in the field can be trusted.

Which leads us to our next point…

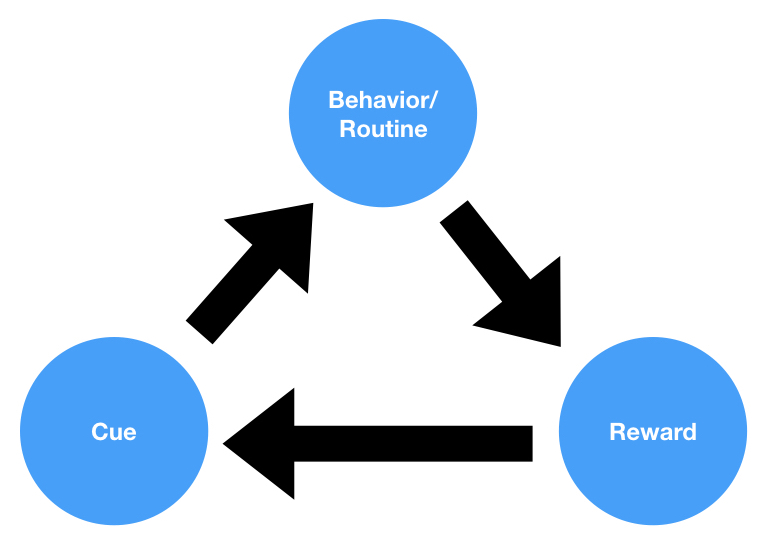

2. Behavioral economics interventions are surprisingly weak in practice.

For a number of years, I’ve been beating the anti-nudge drum. Since 2011, I’ve been running behavioral experiments in the wild, and have always been struck by how weak nudges tend to be.

In my experience, nudges usually fail to have *any* recognizable impact at all.

This is supported by a paper that was recently published by a couple of researchers from UC Berkeley. They looked at the results of 126 randomized controlled trials run by two “nudge units” here in the United States.

I want you to guess how large of an impact these nudges had on average…

30%?

20%?

10%?

5%?

3%?

1.5%?

1%?

0%?

If you said 1.5%, you’d be right*.

According to the academic papers these nudges were based upon, these nudges should have had an average impact of 8.7%. But, as you probably understand by now, behavioral economics is not a particularly trustworthy field.
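To put that gap in perspective, here is a quick back-of-the-envelope sketch. It uses only the two figures quoted in this post (8.7% from the academic papers, and the 1.4% from the footnote); the variable names are my own, and this is an illustration of the arithmetic, not a re-analysis of the trials.

```python
# Effect sizes as quoted in this post, in percent.
published_effect = 8.7  # average impact implied by the academic papers
field_effect = 1.4      # average impact in the nudge-unit trials (footnoted value)

# How many times larger the published estimate is than the field result.
overstatement = published_effect / field_effect

# Share of the published effect that actually showed up in the field.
realized_share = field_effect / published_effect

print(f"Published estimate is {overstatement:.1f}x the field result")
print(f"Share of the published effect realized: {realized_share:.0%}")
```

In other words, the published estimates were roughly six times larger than what the nudge units saw in the field.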

I actually emailed the authors of this paper, and they thought the ~1% effect size of these interventions was something to be applauded—especially if the intervention was cheap & easy.

Unfortunately, no intervention is truly cheap or easy. Every single intervention requires, at the very minimum, administrative overhead. If you’re going to do something, you need someone (or some system) to implement and keep track of it. If an intervention is only going to get you a 1% improvement, it’s probably not even worth it. To be honest, you can probably use your creativity to brainstorm an idea that will get you a 3-4% minimum gain, no behavioral economics “science” required.
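Here is a rough sketch of that break-even logic. Every number in it is hypothetical (the baseline value, the overhead figure, and the function names are assumptions I made up for illustration), so treat it as a way to plug in your own numbers and not as evidence of anything.

```python
def net_gain(baseline_value: float, lift: float, overhead: float) -> float:
    """Value added by an intervention after paying for its overhead.

    baseline_value: what the outcome is worth without the intervention
    lift: relative improvement, e.g. 0.01 for a 1% gain
    overhead: cost of implementing and tracking the intervention
    """
    return baseline_value * lift - overhead


def break_even_lift(baseline_value: float, overhead: float) -> float:
    """Smallest relative improvement that covers the overhead."""
    return overhead / baseline_value


# Hypothetical inputs, chosen only to illustrate the calculation.
baseline = 500_000.0  # e.g. annual revenue from the affected funnel
overhead = 8_000.0    # e.g. staff time to implement and monitor

print(f"Break-even lift: {break_even_lift(baseline, overhead):.1%}")
for lift in (0.01, 0.03, 0.04):
    print(f"Lift of {lift:.0%}: net gain {net_gain(baseline, lift, overhead):,.0f}")
```

With these made-up inputs, a 1% lift does not cover its own overhead, while a 3-4% lift does. Your numbers will differ, but the overhead term never goes to zero.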

Which leads me to the final point I’d like to make: rules and generalizations are overrated.

The reason that fields like behavioral economics are so seductive is that they promise people easy, cookie-cutter solutions to complicated problems.

Figuring out how to increase sales of your product is hard. You need to figure out which variables are responsible for the lackluster interest.

Is the price the issue? Is the product too hard to use? Is the design tacky? Is the sales organization incompetent? Is the refund/return policy lacking? And so on.

Exploring these questions can take months (or years) of hard work, and there’s no guarantee that you’ll succeed.

If, however, a behavioral economist tells you that there are nudges that will increase your sales by 10%, 20%, or 30% without much effort on your part…

Whoa. That’s pretty cool. It’s salvation.

Thus, it’s no surprise that governments and companies have spent hundreds of millions of dollars on behavioral “nudge” units.

Unfortunately, as we’ve seen, these nudges are woefully ineffective.

Specific problems require specific solutions. They don’t require boilerplate solutions based on general principles that someone discovered by studying a bunch of 19-year-old college students.

However, the social sciences have done a good job of convincing people that general principles are better solutions for problems than creative, situation-specific solutions.

In my experience, creative solutions that are tailor-made for the situation at hand *always* perform better than generic solutions based on one study or another.

This is because almost every lab-based study omits one key variable, which I’ll call “situational fit”. In a sense that’s the point of the scientific process: to control for every variable except the one you’re interested in, so that you find effects that generalize across contexts. However, humans are not billiard balls or hydrogen atoms. We’re remarkably complex and will react to the same stimuli in quite different ways depending on the circumstances. This is one of the fundamental flaws of lab-based behavioral science research. It puts humans in bizarre contexts and assumes that their behavior in an unnatural setting will generalize to complex, natural settings. This is (almost) never the case. Just because people are affected one way in a lab at UC Berkeley doesn’t mean they’ll be impacted the same way when sitting at home on the couch or while chatting with friends at the bar.

The fact that pretty much no one in the behavioral sciences talks about this is astounding, and I believe it’s one of the many reasons that we have a replication crisis in the behavioral sciences (along with publication bias, bad stats, improper incentives, etc.).

In my experience, the best behavior change interventions come when creativity is informed by a scientific understanding of behavior—resulting in elegant, situation-specific solutions. General principles are a nice starting point for one’s brainstorming, but should not be where one ends.

Currently, applied behavioral science is where creativity goes to die. This is a shame, since the best behavioral scientists I’ve ever worked with, including Dan Ariely, are creative geniuses first and behavioral scientists second.

If we’re going to save the field, we need to get away from one-size-fits-all nudges and embrace creativity. If we don’t, a behavioral economics PhD won’t be worth the paper it’s printed on, and the applied behavioral sciences will go the way of the Dodo instead of Davos.

*The actual number is 1.4%, but if I had written that out you would have chosen it because of its specificity.