What is statistical power?

Statistical power is the probability that a study will detect a true effect if one exists. Low power means a high risk of false negatives: concluding there is no effect when there actually is one.

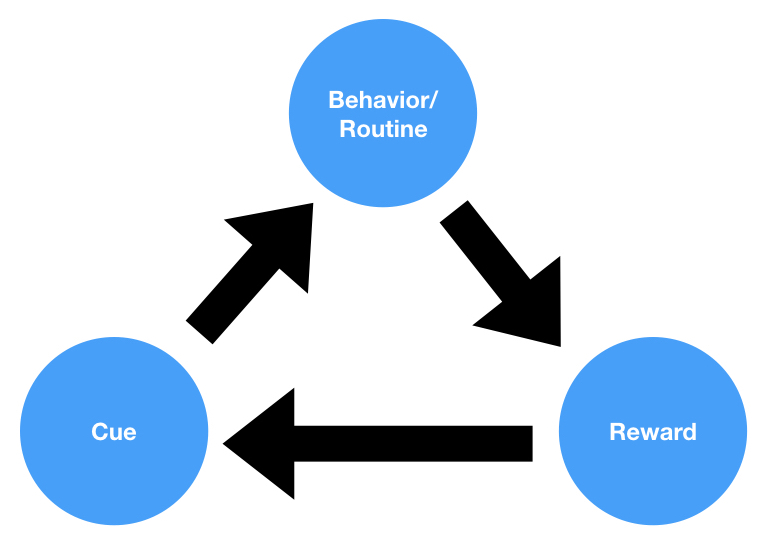

How it works

Power depends on three factors: sample size (larger samples give more power), effect size (larger effects are easier to detect), and significance level (a stricter threshold, such as α = 0.01 instead of 0.05, lowers power unless the sample size is increased). A study with 80% power (the conventional standard) has a 20% chance of missing a real effect of the assumed size. Button et al. (2013) estimated that the median power of neuroscience studies was just 21%, meaning the typical study was more likely to miss a real effect than to detect it. Underpowered studies that do find significant results tend to report inflated effect sizes (the winner’s curse), because only overestimates of the true effect are large enough to cross the significance threshold.
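The relationship between these three factors can be sketched in a few lines of Python. This is a minimal illustration, not an exact calculation: it assumes a two-sided comparison of two equal-sized groups, uses a normal approximation in place of the t distribution, and expresses effect size as a standardized mean difference (Cohen's d). The function name `approx_power` is made up for this example.

```python
from math import sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal: mean 0, standard deviation 1


def approx_power(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-group comparison of means.

    Assumptions (for illustration only): equal group sizes, standardized
    effect size d, and a normal approximation rather than an exact t-test.
    """
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)  # two-sided rejection threshold
    shift = d * sqrt(n_per_group / 2)            # expected value of the test statistic
    # Probability that the test statistic falls in either rejection region.
    return _STD_NORMAL.cdf(shift - z_crit) + _STD_NORMAL.cdf(-shift - z_crit)


if __name__ == "__main__":
    # Larger samples give more power (d = 0.5, alpha = 0.05).
    for n in (20, 64, 100):
        print(f"n per group = {n:3d}  power ~ {approx_power(0.5, n):.2f}")

    # Larger effects are easier to detect (n = 64 per group).
    for d in (0.2, 0.5, 0.8):
        print(f"d = {d:.1f}  power ~ {approx_power(d, 64):.2f}")

    # A stricter significance level lowers power at a fixed sample size.
    for alpha in (0.05, 0.01):
        print(f"alpha = {alpha}  power ~ {approx_power(0.5, 64, alpha):.2f}")
```

With a medium effect (d = 0.5) and 64 participants per group, the approximation gives power close to the conventional 80%. Because it ignores the extra uncertainty from estimating the variance, it slightly overstates power in small samples.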

Applied example

A clinical trial with 30 participants per group has roughly 30% power to detect a small-to-medium effect. If the treatment genuinely works at that level, the study has about a 70% chance of returning a non-significant result, which is easily misread as evidence that the treatment does not work. The company may then abandon an effective treatment on the basis of an underpowered study.
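The figures in this example can be checked approximately, and the same formula can be turned around to ask how many participants the trial would have needed. The sketch below rests on assumptions that the example does not state: a two-sided test at α = 0.05, equal group sizes, a normal approximation, and "small-to-medium" read as a standardized effect of d = 0.35 to 0.4. The function names are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution


def power_two_groups(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate two-sided power for comparing two group means."""
    z_crit = Z.inv_cdf(1 - alpha / 2)
    shift = d * sqrt(n_per_group / 2)
    return Z.cdf(shift - z_crit) + Z.cdf(-shift - z_crit)


def n_per_group_for_power(d: float, power: float = 0.80, alpha: float = 0.05) -> int:
    """Approximate participants needed per group to reach the target power.

    Uses the standard normal-approximation formula
    n = 2 * ((z_{1 - alpha/2} + z_{power}) / d) ** 2, rounded up.
    """
    z_alpha = Z.inv_cdf(1 - alpha / 2)
    z_power = Z.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / d) ** 2)


if __name__ == "__main__":
    for d in (0.35, 0.40):  # assumed values for a "small-to-medium" effect
        achieved = power_two_groups(d, n_per_group=30)
        needed = n_per_group_for_power(d, power=0.80)
        print(f"d = {d:.2f}: power with 30 per group ~ {achieved:.2f}, "
              f"need about {needed} per group for 80% power")
```

Under these assumptions, 30 participants per group yields power of roughly 0.27 to 0.34, consistent with the "roughly 30%" in the example, while reaching 80% power would take on the order of 100 to 130 participants per group.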

Why it matters

Statistical power is an often-neglected determinant of research quality. Without adequate power, negative findings are uninformative and positive findings are more likely to be false or exaggerated, which wastes resources and misleads practice.